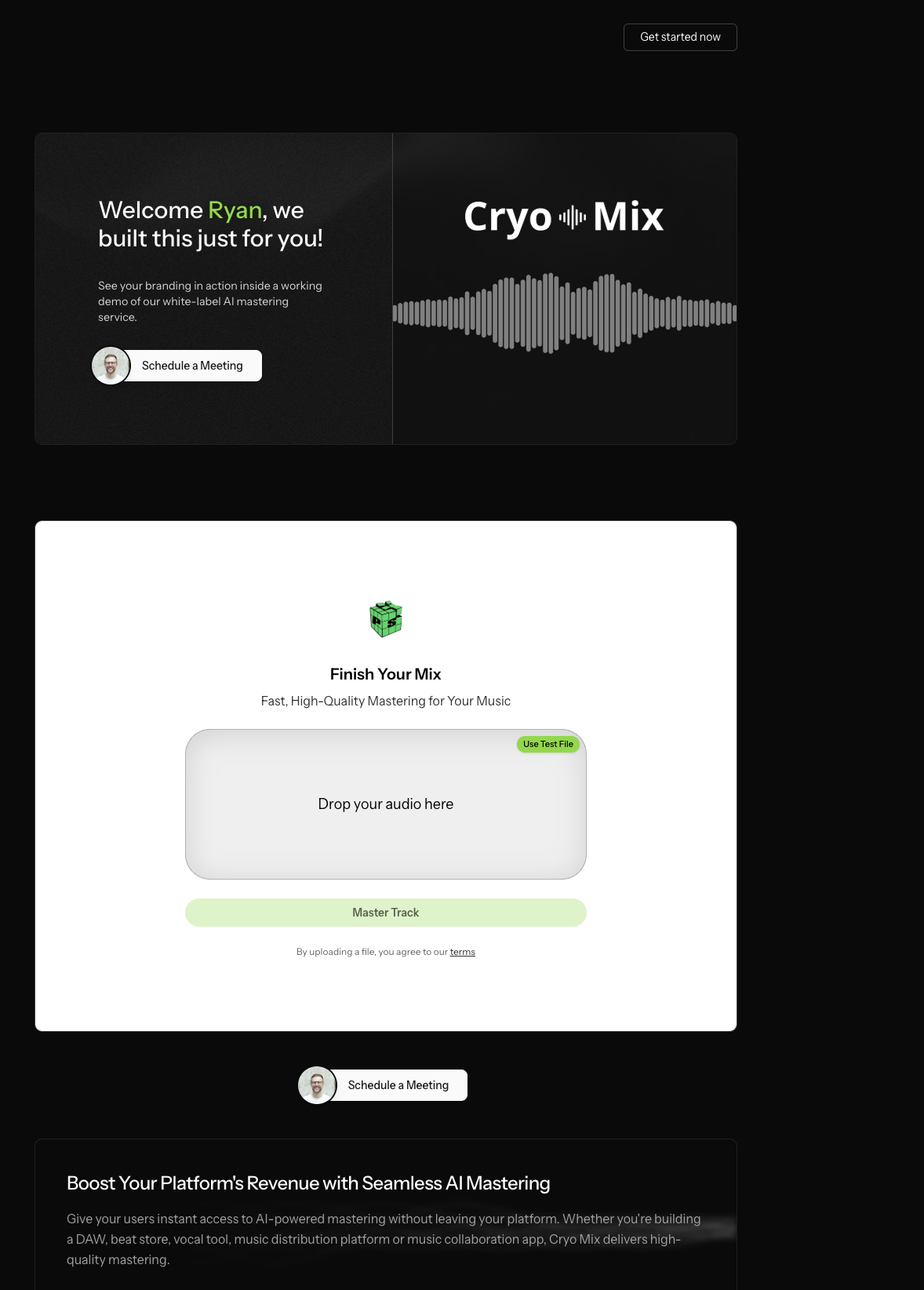

Adi Ranganathan // Producer & Audio Engineer

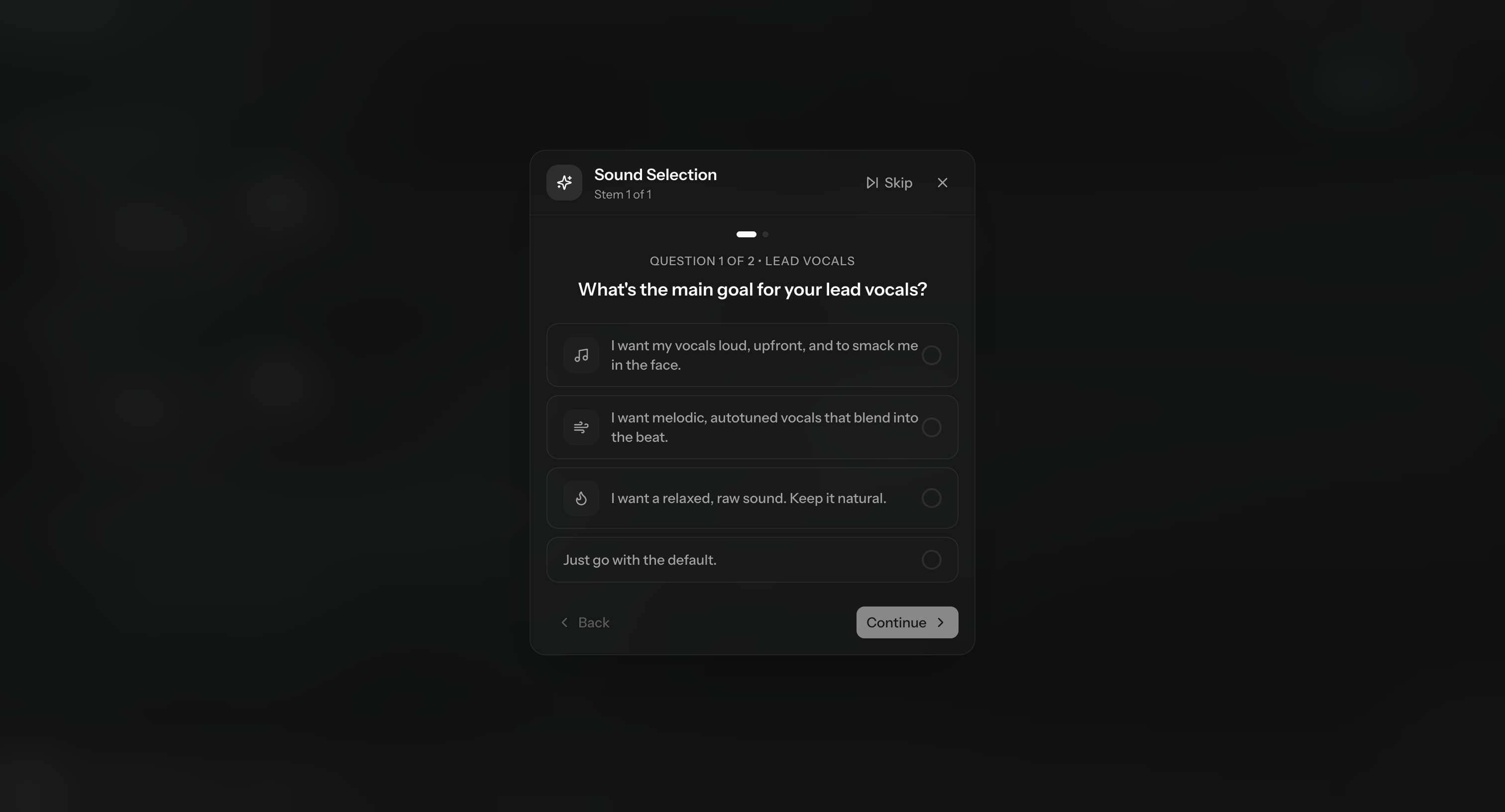

Bridging Creative Intent with Technical Architecture.

I’m a producer first, and an audio engineer second.

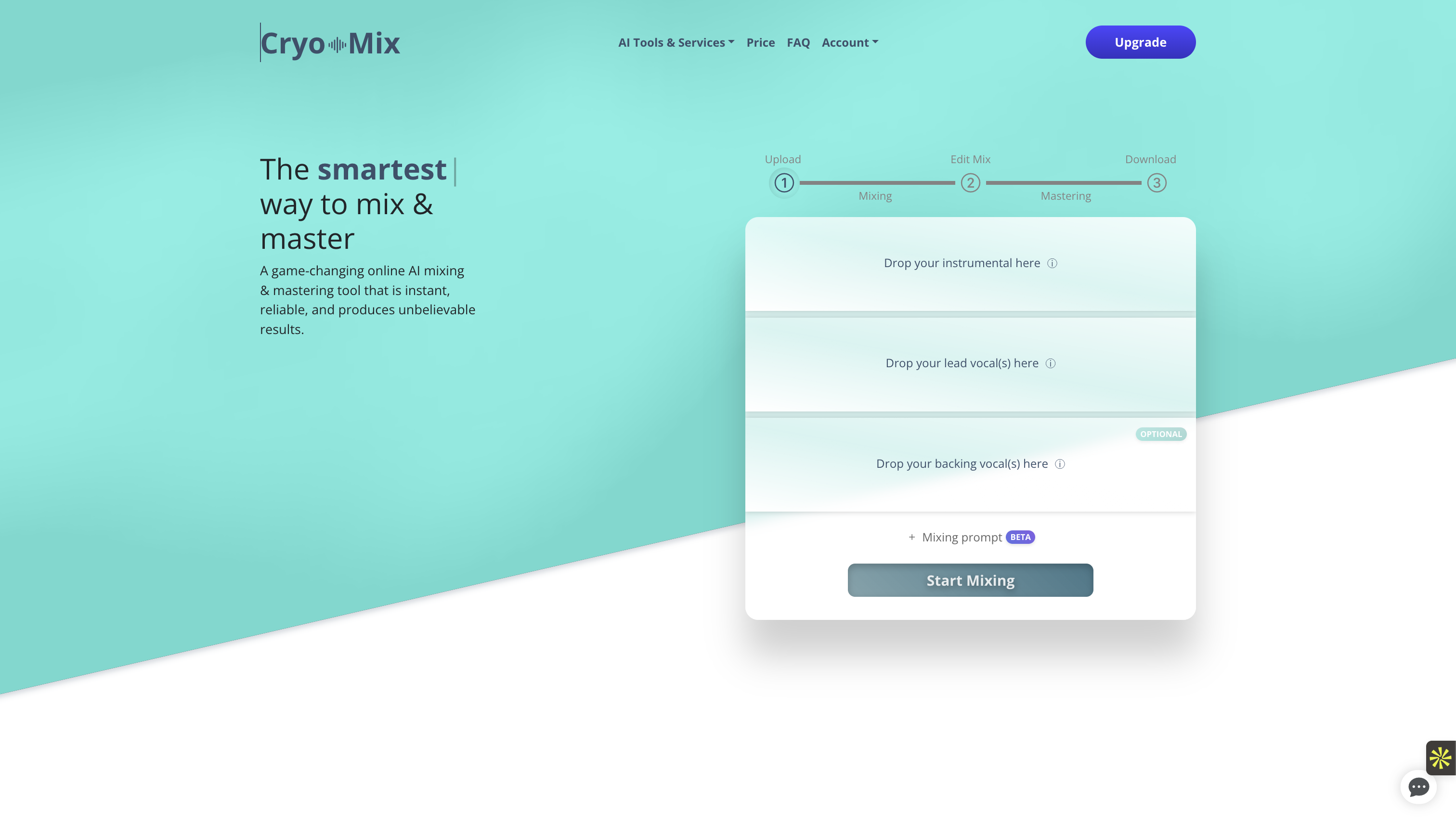

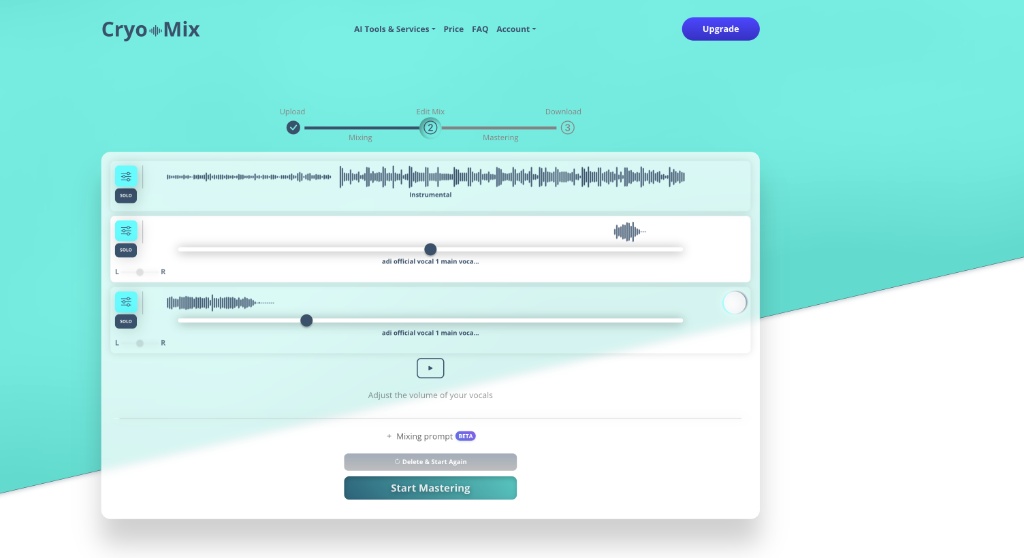

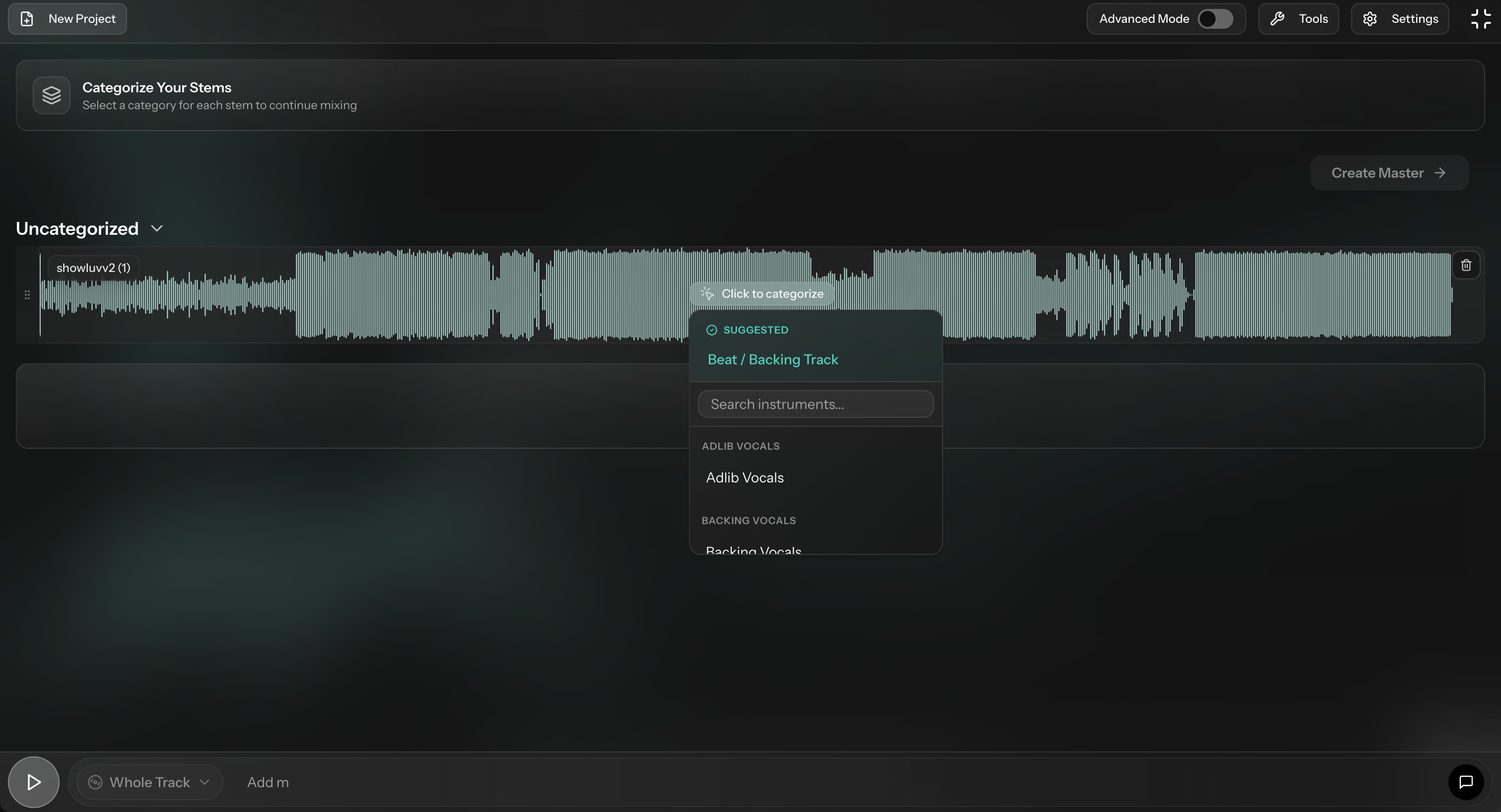

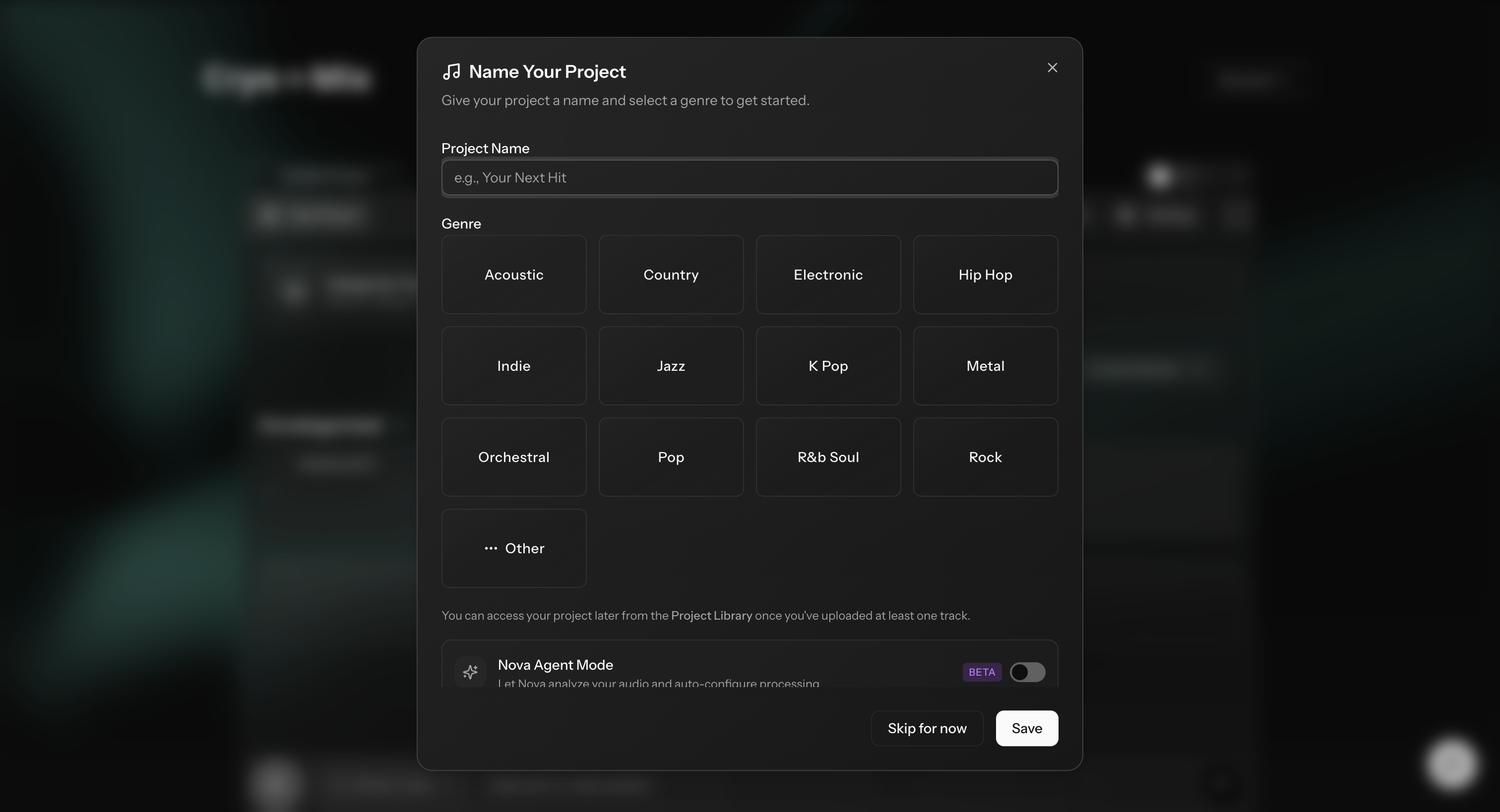

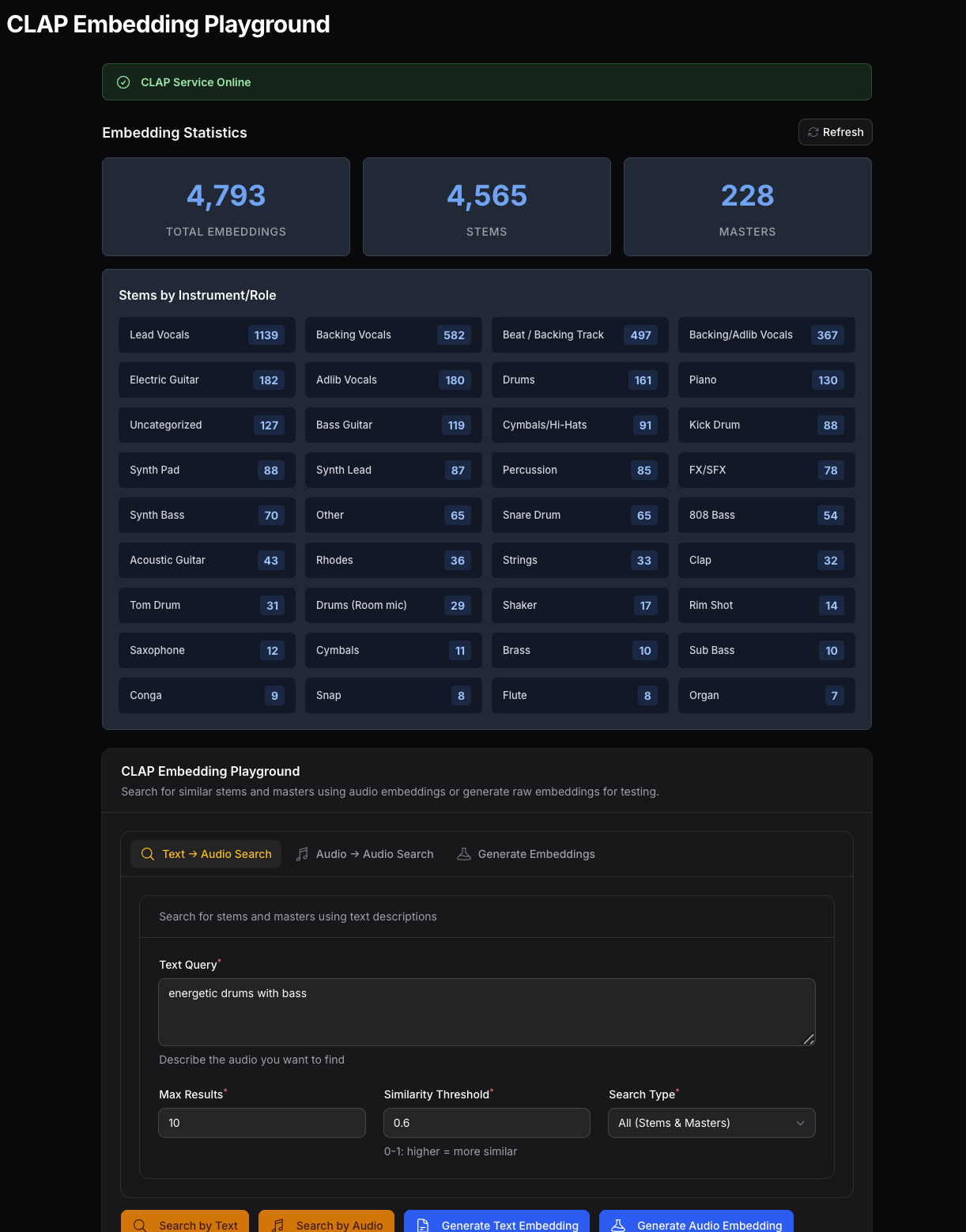

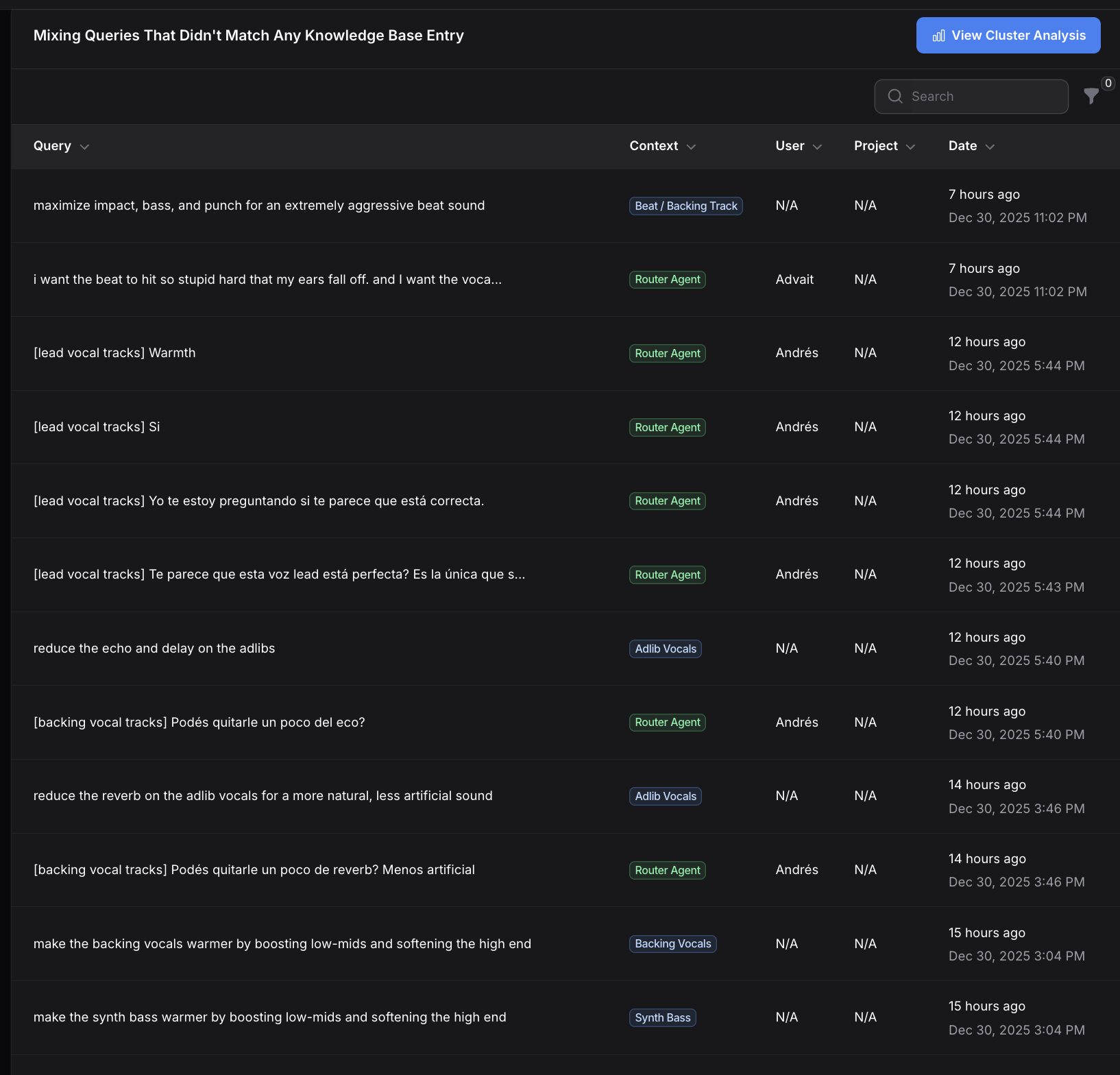

This is the story of how I took Cryo Mix from a flatlined MVP to a scaling AI platform by prioritizing the artist's workflow over raw technical capability.